Engaging with the tech community is not a "nice to have" sideline for defence policymakers – it is "absolutely indispensable to have this community engaged from the outset in the design, development and use of the frameworks that will guide the safety and security of AI systems and capabilities", said Gosia Loy, co-deputy head of the UN Institute for Disarmament Research (UNIDIR).

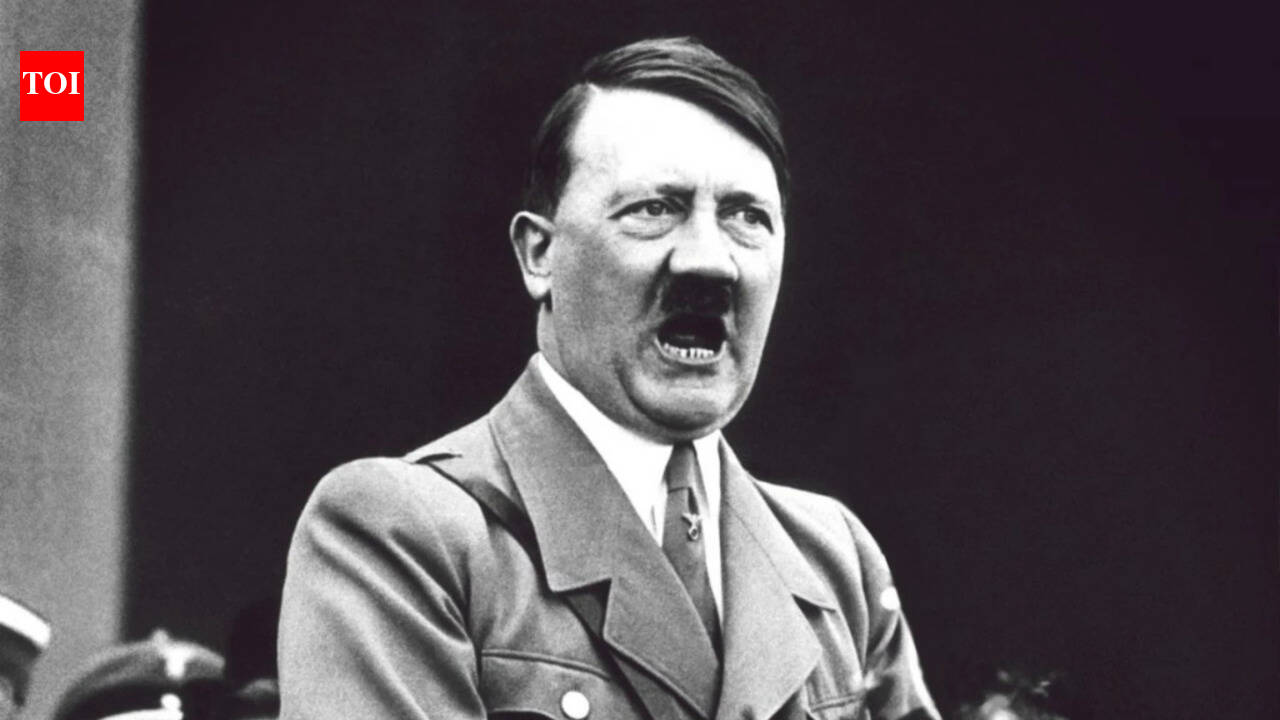

Speaking at the recent Global Conference on AI Security and Ethics hosted by UNIDIR in Geneva, she stressed the importance of erecting effective guardrails as the world navigates what is frequently called AI's "Oppenheimer moment" – in reference to Robert Oppenheimer, the US nuclear physicist best known for his pivotal role in creating the atomic bomb.

Oversight is needed so that AI developments respect human rights, international law and ethics – particularly in the field of AI-guided weapons – and so that these powerful technologies develop in a controlled, responsible manner, the UNIDIR official insisted.

Flawed tech

AI has already created a security dilemma for governments and militaries around the world.

The dual-use nature of AI technologies – the fact that they can be used in civilian and military settings alike – means that developers may lose touch with the realities of battlefield conditions, where their programming could cost lives, warned Arnaud Valli, Head of Public Affairs at Comand AI.

The tools are still in their infancy but have long fuelled fears that they could be used to make life-or-death decisions in a war setting, removing the need for human decision-making and accountability. Hence the growing calls for regulation, to ensure that mistakes with disastrous consequences are avoided.

"We see these systems fail all the time," said David Sully, CEO of the London-based company Advai, adding that the technologies remain "very unrobust".

"So, making them go wrong is not as difficult as people sometimes think," he noted.

A shared responsibility

At Microsoft, teams are focusing on the core principles of safety, security, inclusiveness, fairness and accountability, said Michael Karimian, Director of Digital Diplomacy.

The US tech giant founded by Bill Gates places limitations on real-time facial recognition technology used by law enforcement that could cause mental or physical harm, Mr. Karimian explained.

Clear safeguards must be put in place and firms must collaborate to break down silos, he told the event at UN Geneva.

"Innovation isn't something that just happens within one organization. There's a responsibility to share," said Mr. Karimian, whose company partners with UNIDIR to ensure that AI complies with international human rights law.

Oversight paradox

Part of the problem is that technologies are evolving so fast that countries are struggling to keep up.

"AI development is outpacing our ability to manage its many risks," said Sulyna Nur Abdullah, strategic planning chief and Special Advisor to the Secretary-General at the International Telecommunication Union (ITU).

"We need to address the AI governance paradox, recognizing that regulations sometimes lag behind technology makes it a must for ongoing dialogue between policy and technical experts to develop tools for effective governance," Ms. Abdullah said, adding that developing countries must also get a seat at the table.

Accountability gaps

More than a decade ago, in 2013, renowned human rights expert Christof Heyns warned in a report on Lethal Autonomous Robotics (LARs) that "taking humans out of the loop also risks taking humanity out of the loop".

Today it is no easier to translate context-dependent legal judgments into a software programme, and it is still crucial that "life and death" decisions are taken by humans and not robots, insisted Peggy Hicks, Director of the Right to Development Division of the UN Human Rights Office (OHCHR).

Mirroring society

While big tech and governance leaders largely see eye to eye on the guiding principles of AI defence systems, these ideals may be at odds with the companies' bottom line.

"We are a private company – we look for profitability as well," said Comand AI's Mr. Valli.

"Reliability of the system is sometimes very hard to find," he added. "But when you work in this sector, the responsibility could be enormous, absolutely enormous."

Unanswered challenges

While many developers are committed to designing algorithms that are "fair, secure, robust", according to Mr. Sully, there is no road map for implementing these standards, and companies may not even know exactly what they are trying to achieve.

These principles "all dictate how adoption should take place, but they don't really explain how that should happen," said Mr. Sully, reminding policymakers that "AI is still in the early stages".

Big tech and policymakers need to zoom out and consider the bigger picture.

"What robustness means for a system is an incredibly technical, really challenging objective to determine, and it is currently unanswered," he continued.

No AI ‘fingerprint’

Mr. Sully, who described himself as a "big supporter of regulation" of AI systems, used to work for the UN-mandated Comprehensive Nuclear-Test-Ban Treaty Organization in Vienna, which monitors whether nuclear testing takes place.

But identifying AI-guided weapons, he says, poses a whole new challenge that nuclear arms, which bear forensic signatures, do not.

"There's a practical problem in terms of how you police any sort of regulation at an international level," the CEO said. "It's the bit nobody wants to address. But until that is addressed… I think that's going to be a huge, huge obstacle."

Future safeguarding

Delegates at the UNIDIR conference insisted on the need for strategic foresight to understand the risks posed by the cutting-edge technologies now emerging.

For Mozilla, which trains the new generation of technologists, future developers "should be aware of what they are doing with this powerful technology and what they are building", the firm's Mr. Elias insisted.

Academics like Moses B. Khanyile of Stellenbosch University in South Africa believe universities also bear a "supreme responsibility" to safeguard core ethical values.

The interests of the military – the intended users of these technologies – and of governments as regulators must be "harmonised", said Dr. Khanyile, Director of the Defence Artificial Intelligence Research Unit at Stellenbosch University.

"They must see AI tech as a tool for good, and therefore they must become a force for good."

Countries engaged

Asked what single action they would take to build trust between countries, diplomats from China, the Netherlands, Pakistan, France, Italy and South Korea also weighed in.

"We need to define a line of national security in terms of export control of hi-tech technologies", said Shen Jian, Ambassador Extraordinary and Plenipotentiary (Disarmament) and Deputy Permanent Representative of the People's Republic of China.

Pathways for future AI research and development must also include other emerging fields such as physics and neuroscience.

"AI is complicated, but the real world is even more complicated," said Robert in den Bosch, Disarmament Ambassador and Permanent Representative of the Netherlands to the Conference on Disarmament. "For that reason, I would say that it is also important to look at AI in convergence with other technologies, in particular cyber, quantum and space."